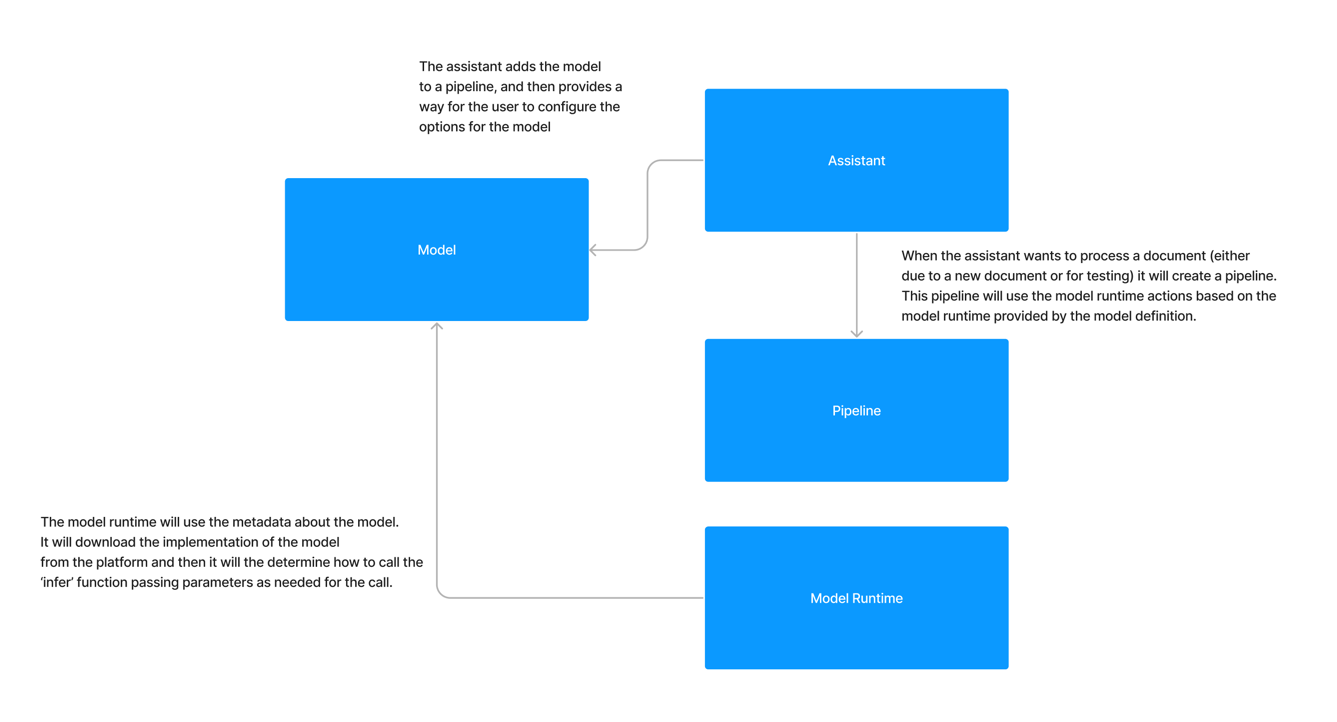

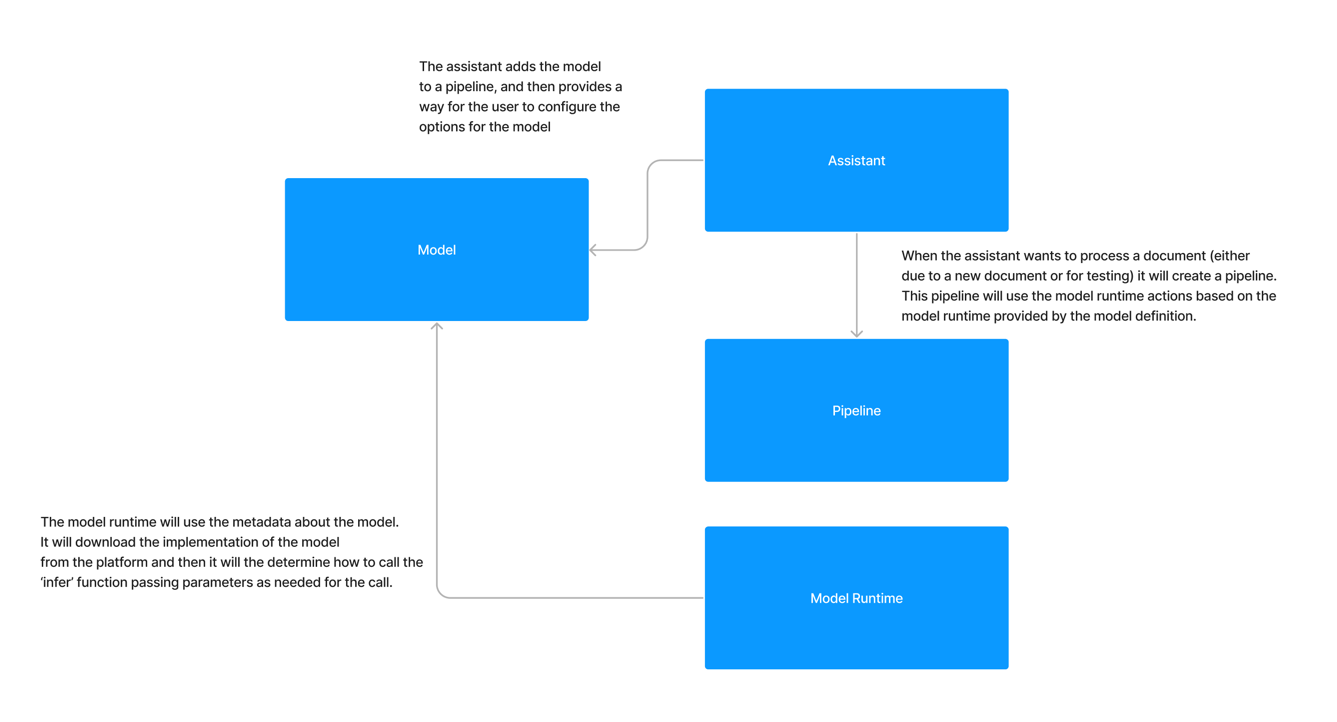

Module runtimes provide the execution environment for Kodexa modules. They package the Linux and Python dependencies needed to run your code consistently across Lambda and Kubernetes-based execution.

The runtime is responsible for downloading your module, importing the correct package, injecting execution context, and returning the processed document back to the platform. In practice, the runtime defines the contract between your module code and Kodexa.

How do Modules interact with the Module Runtime?

When you deploy a module into Kodexa, you specify the module runtime that you want to use. Today, all Kodexa module runtimes share the same interface, but this may change in the future. How the runtime calls your module depends on how you declare the module in the module.yml file.

Inference

The most common starting point for working with a module is learning how inference works. Let’s take a simple example of a module.yml:

```yaml
# A very simple first module
slug: my-module
version: 1.0.0
orgSlug: kodexa
type: store
storeType: MODEL
name: My Module
metadata:
  moduleRuntimeRef: kodexa/base-module-runtime
  type: module
  contents:
    - module/*
```

The key setting here is moduleRuntimeRef, which is set to kodexa/base-module-runtime. The platform resolves that runtime, provisions the correct execution environment, and uses it to run the module during assistant executions.

When the module runtime is called, it receives the document plus the inference options configured for that step. By convention most modules package their Python code under module/, and the runtime calls infer from that package. If your archive contains multiple Python packages, the runtime can be told which package to import by setting metadata.moduleRuntimeParameters.module.

The module runtime will pass the document that we are processing to the module and then the module will return a document. The module runtime will then pass the document back to the platform for further processing.
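A minimal sketch of the module side of this round trip is shown here. It assumes the conventional layout where the code lives in module/__init__.py; that file name is an assumption, since the text above only names the module/ directory. The function receives the document and hands the same object back.

```python
# module/__init__.py (assumed file name inside the conventional module/ package)
import logging

logger = logging.getLogger(__name__)


def infer(document):
    """Entry point called by the runtime; it must return the processed document."""
    logger.info("Received a document for inference")
    # ... inspect or modify the document here ...
    return document
```

Returning the document, even when it is unchanged, gives the runtime something to pass back to the platform.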

Inference with Options

In the previous example, we saw how the module runtime would pass the document to the module. In this example, we will see how the module runtime will pass options to the module.

First, let’s add some inference options to our module.yml file:

```yaml
# A very simple first module
slug: my-module
version: 1.0.0
orgSlug: kodexa
type: store
storeType: MODEL
name: My Module
metadata:
  moduleRuntimeRef: kodexa/base-module-runtime
  type: module
  inferenceOptions:
    - name: my_option
      type: string
      default: "Hello World"
      description: "A simple option"
  contents:
    - module/*
```

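The module can then declare my_option as a parameter of its infer function:

```python
import logging

logger = logging.getLogger(__name__)


def infer(document, my_option):
    # my_option is filled in from the inference options configured for the step
    logger.info(f"Hello from the module, the option is {my_option}")
    return document
```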

Targeting a Specific Package

If your module ZIP contains more than one Python package, set metadata.moduleRuntimeParameters.module so the bridge imports the correct package:

```yaml
metadata:
  moduleRuntimeParameters:
    module: my_module
```

The bridge calls infer by default. For event-aware modules, it falls back to handle_event when that function is present.
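The lookup order described above can be illustrated in plain Python. This is a sketch of the idea, not the bridge's actual code: resolve_entry_point is a hypothetical helper, and the module objects are stand-ins built with types.SimpleNamespace rather than real imported packages.

```python
from types import SimpleNamespace


def resolve_entry_point(module):
    """Return the module's infer function, or handle_event if infer is absent."""
    for name in ("infer", "handle_event"):
        func = getattr(module, name, None)
        if callable(func):
            return func
    raise AttributeError("module defines neither infer nor handle_event")


# Stand-ins for imported packages (hypothetical, for illustration only)
inference_module = SimpleNamespace(infer=lambda document: document)
event_module = SimpleNamespace(handle_event=lambda event: {"handled": event})

inference_result = resolve_entry_point(inference_module)("doc")
event_result = resolve_entry_point(event_module)("evt")
```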

Magic Parameter Injection

When the Kodexa bridge calls a module function, it inspects the function signature and injects a value for each parameter whose name it recognizes. You only need to declare the parameters you want.
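To make the mechanism concrete, the following sketch shows one way signature-based injection can be done with the standard inspect module. It is an illustration under assumptions, not the bridge's implementation: call_with_injection and the sample values are hypothetical.

```python
import inspect


def call_with_injection(func, available):
    """Call func with only the values whose names appear in its signature."""
    params = inspect.signature(func).parameters
    kwargs = {name: available[name] for name in params if name in available}
    return func(**kwargs)


def infer(document, model_base=None):
    return f"{document} processed with models in {model_base}"


# Values a runtime might have on hand for one execution (hypothetical data)
available = {
    "document": "doc-1",
    "model_base": "/tmp/model",
    "execution_id": "exec-42",  # not declared by infer, so it is not passed
}

result = call_with_injection(infer, available)
```

Because values are matched by name, adding or removing a parameter in the function signature is all it takes to opt in or out of a value.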

Available Parameters

| Parameter | Type | Description |
|---|---|---|
| document | Document | The Kodexa document being processed (inference only) |
| model_base | str | Path to the model’s base directory on disk |
| pipeline_context | PipelineContext | The pipeline execution context |
| module_ref | str | Reference to the module being executed |
| module_options | dict | Module-level configuration options |
| assistant | Assistant | The assistant associated with this execution |
| assistant_id | str | The assistant ID |
| project | Project | The project this execution belongs to |
| execution_id | str | The current execution ID |
| status_reporter | StatusReporter | Helper for posting live status updates to the UI |

pipeline_context is the main entry point for execution metadata. It exposes:

- pipeline_context.document_family
- pipeline_context.content_object
- pipeline_context.document_store
- pipeline_context.context for the raw event/context payload
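As a rough sketch, an infer function might read these attributes as follows. The attribute names come from the list above; the stand-in object and its values are hypothetical and exist only so the function can be exercised locally without the Kodexa runtime.

```python
import logging
from types import SimpleNamespace

logger = logging.getLogger(__name__)


def infer(document, pipeline_context=None):
    """Log execution metadata when a pipeline context is injected."""
    if pipeline_context is not None:
        logger.info("Document family: %s", pipeline_context.document_family)
        logger.info("Content object: %s", pipeline_context.content_object)
        logger.info("Document store: %s", pipeline_context.document_store)
        logger.debug("Raw context payload: %s", pipeline_context.context)
    return document


# Stand-in for local testing (hypothetical values, not real Kodexa types)
fake_context = SimpleNamespace(
    document_family="family-1",
    content_object="content-1",
    document_store="store-1",
    context={"event": "example"},
)
processed = infer("doc", pipeline_context=fake_context)
```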

Usage

Declare only the parameters your function needs:

```python
def infer(document, pipeline_context=None, model_base=None):
    # Only document, pipeline_context, and model_base are injected
    ...
```

Inference Options

In addition to the magic parameters above, any inference options declared in your module.yml are also injected by name. If you have an inference option called my_option then you will get a parameter called my_option passed to your inference function.

```python
import logging

logger = logging.getLogger(__name__)


def infer(document, my_option):
    logger.info(f"Hello from the module, the option is {my_option}")
    return document
```

StatusReporter

The status_reporter parameter provides fire-and-forget status updates that appear in the UI during execution. All calls are safe: errors are logged but never propagated.

status_reporter.update(title, subtitle=None, status_type="processing")

| Argument | Required | Description |
|---|---|---|
| title | Yes | Primary status message |
| subtitle | No | Secondary detail text |
| status_type | No | One of: thinking, searching, planning, reviewing, processing, analyzing, writing, waiting |

```python
def infer(document, status_reporter=None, model_base=None):
    if status_reporter:
        status_reporter.update("Extracting tables", status_type="processing")

    # ... do work ...

    if status_reporter:
        status_reporter.update("Running classification",
                               subtitle="Page 3 of 12",
                               status_type="analyzing")

    # ... more work ...

    return document
```
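When a module posts many updates, the repeated None checks can be folded into a small helper. The sketch below assumes only the update signature shown above; report and RecordingReporter are hypothetical names, and RecordingReporter is a local stand-in that records calls instead of posting to the UI.

```python
def report(status_reporter, title, subtitle=None, status_type="processing"):
    """Send a status update if a reporter was injected; otherwise do nothing."""
    if status_reporter is not None:
        status_reporter.update(title, subtitle=subtitle, status_type=status_type)


class RecordingReporter:
    """Stand-in for local testing; it records calls instead of posting to the UI."""

    def __init__(self):
        self.calls = []

    def update(self, title, subtitle=None, status_type="processing"):
        self.calls.append((title, subtitle, status_type))


def infer(document, status_reporter=None):
    report(status_reporter, "Extracting tables")
    # ... do work ...
    report(status_reporter, "Running classification",
           subtitle="Page 3 of 12", status_type="analyzing")
    return document


reporter = RecordingReporter()
infer("doc", status_reporter=reporter)
```

The same infer function also runs unchanged when no reporter is injected, which keeps local tests simple.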

Available Runtimes

Kodexa provides several built-in module runtimes for different processing needs:

| Runtime | Slug | Description |
|---|---|---|
| Base Module Runtime | kodexa/base-module-runtime | Standard Python runtime for custom modules |
| Go Scripting Runtime | kodexa/go-scripting-runtime | Lightweight runtime for inline JavaScript modules |
| Cloud Model Runtime | kodexa/cloud-model-runtime | Runtime for cloud-hosted AI models |
| Excel Runtime | kodexa/excel-runtime | Excel/spreadsheet document processing |
| Azure Runtime | kodexa/azure-runtime | Azure Form Recognizer integration |
| Textract Runtime | kodexa/textract-runtime | AWS Textract integration |
| Google Runtime | kodexa/google-runtime | Google Document AI integration |
| UNO Runtime | kodexa/uno-runtime | Office document conversion via LibreOffice/UNO (Word, Excel, PowerPoint) |
| Agent Model Runtime | kodexa/agent-model-runtime | Runtime for agentic AI processing |

Pipeline Context Status Handler

For step-level progress tracking (progress bars in the UI), use the pipeline_context.status_handler callback:

```python
def infer(document, pipeline_context=None):
    pages = document.get_nodes()
    for i, page in enumerate(pages):
        # pipeline_context defaults to None, so guard before reporting progress
        if pipeline_context:
            pipeline_context.status_handler(
                f"Processing page {i+1}",  # message
                i + 1,                     # progress
                len(pages)                 # progress_max
            )
        # ... process page ...
    return document
```